NetSec Spotlight: AI Access Security

In the previous post, I introduced Data Loss Prevention (DLP) as a core network security capability for identifying sensitive information and enforcing policy to prevent exposure.

This post examines AI Access Security and how the NetSec platform applies content-aware inspection and policy enforcement to govern AI-driven traffic. AI Access Security applies these controls natively, and can also align with wider DLP policies when both are deployed.

AI tools are being adopted faster than almost any other technology, and users are already:

- Copying sensitive data into prompts

- Connecting AI tools to internal systems

- Using browser-based, API-based, and embedded AI features interchangeably

AI-driven interactions span browsers, APIs, SaaS integrations, and internal data sources, often occurring within encrypted and otherwise sanctioned traffic flows.

From a network security perspective, this represents a new access pattern that challenges traditional application-centric controls:

- How do you distinguish AI interactions from standard encrypted application traffic?

- How do you detect AI features embedded within otherwise sanctioned SaaS applications?

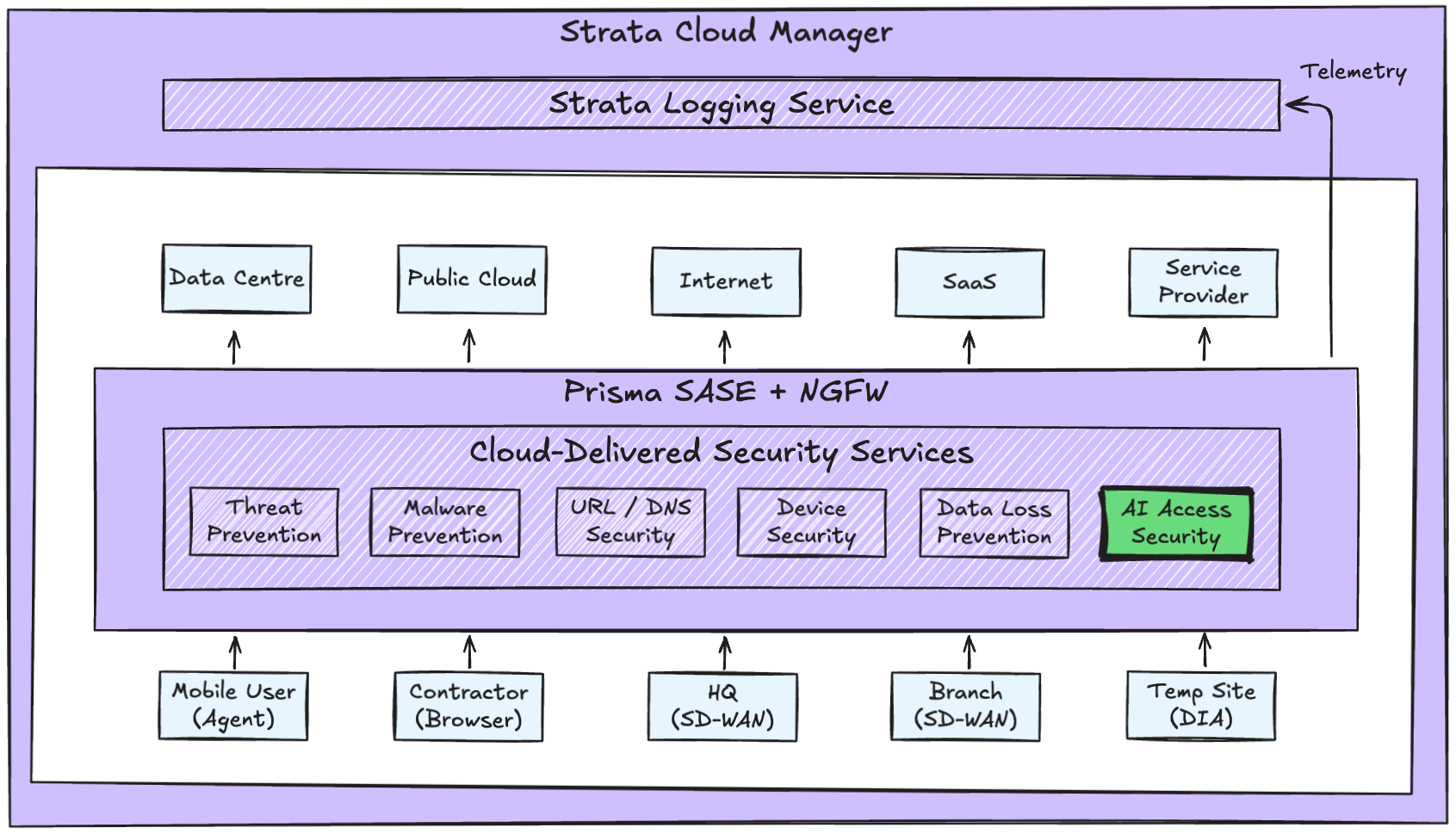

Platform Context

AI Access Security is a shared inspection capability delivered through Cloud-Delivered Security Services to enable the safe adoption of GenAI. It extends the inspection layer within the existing platform architecture, rather than introducing a new enforcement tier or control plane.

Inspection of GenAI interactions and prompts can happen inline at the network layer or directly within the browser session through the Enterprise Browser. Because the platform is already inspecting this traffic, GenAI-specific policies can be applied alongside existing inspection without a separate processing stage.

Strata Cloud Manager (SCM) continues to provide centralised management, policy, and visibility with the added context of GenAI applications. This means:

- Policy authority remains centralised

- Enforcement remains distributed

- Telemetry remains normalised within the same data model

Operational Capabilities

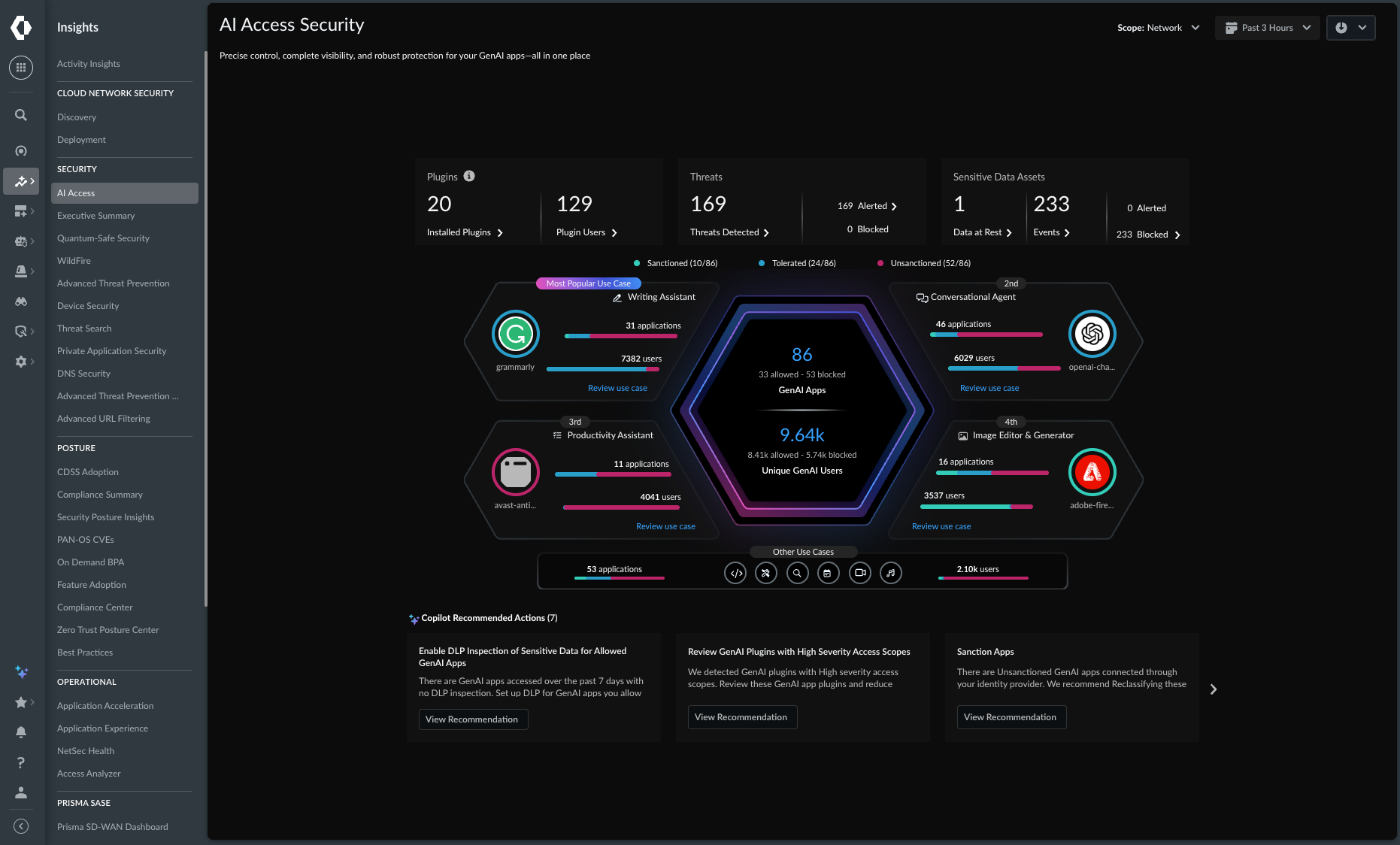

AI Access Security identifies GenAI applications and interactions, along with their associated risk. It helps contain shadow AI sprawl, applies granular access policies, and applies DLP inspection to prompts and responses.

AI Access Security moves inspection and enforcement beyond application and user context. Operationally, it provides:

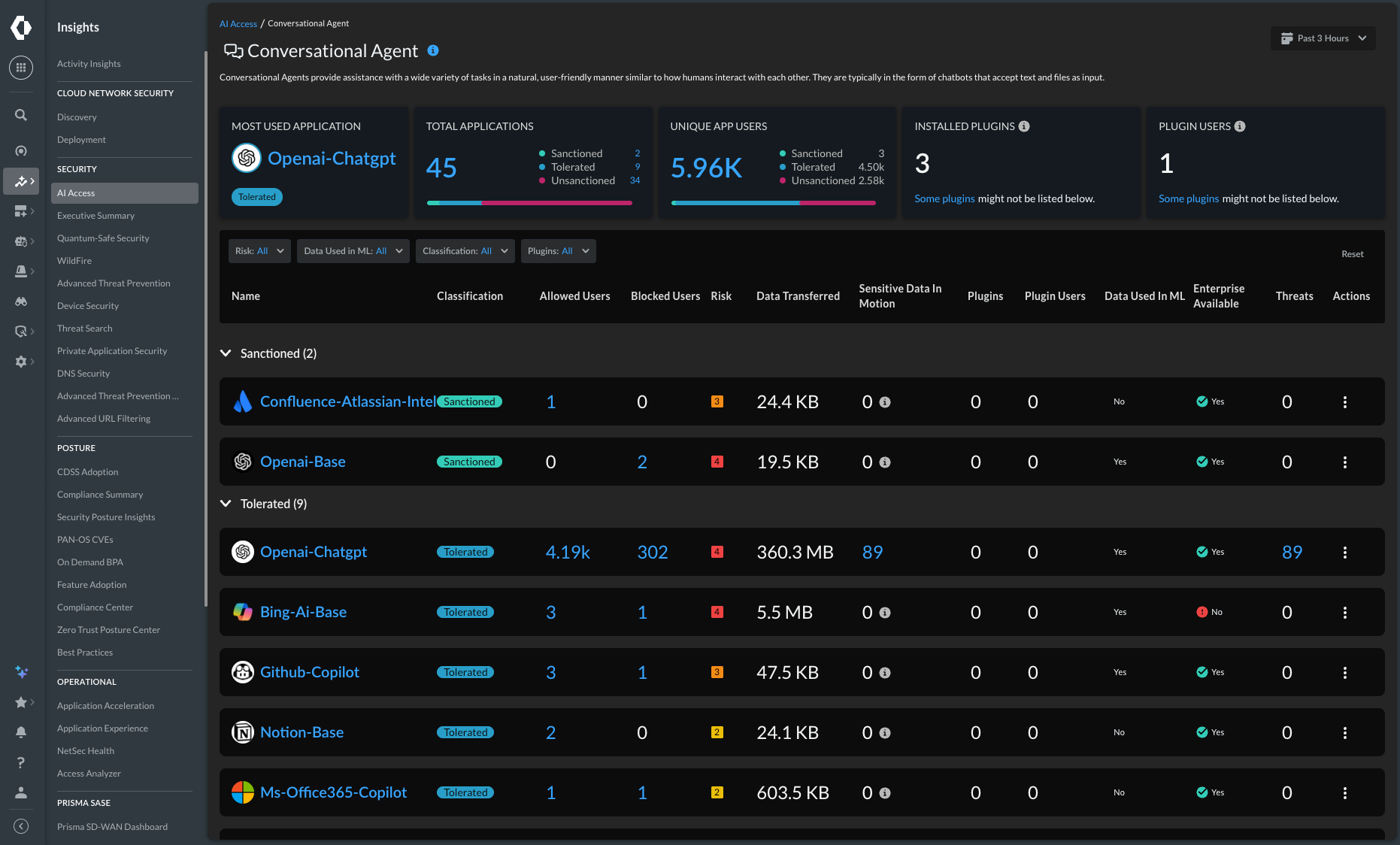

- Automatic identification of GenAI applications, including browser-based, API-driven, and AI features embedded inside SaaS platforms

- Detection of AI usage within otherwise sanctioned applications

- Inline inspection of prompts and responses without redirecting traffic to an external proxy tier
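As a rough illustration of the identification step, the sketch below matches a request URL against a small catalogue of GenAI endpoints, including an AI feature that sits under a path of an otherwise sanctioned SaaS host. The catalogue, the hostnames, and the `classify` function are all hypothetical. This post does not describe the platform's actual identification logic, which is likely far richer than host and path matching.

```python
from dataclasses import dataclass
from typing import Optional
from urllib.parse import urlsplit

# Hypothetical catalogue: (host, path prefix) -> (app name, embedded-in-SaaS flag).
# Hosts and paths are invented for illustration only.
GENAI_CATALOGUE = {
    ("chat.example-ai.test", "/"): ("ExampleChat", False),
    ("api.example-ai.test", "/v1/"): ("ExampleChat API", False),
    ("docs.example-saas.test", "/assistant/"): ("ExampleDocs Assistant", True),
}


@dataclass(frozen=True)
class GenAIMatch:
    app: str
    embedded: bool  # True when the AI feature lives inside a sanctioned SaaS app


def classify(url: str) -> Optional[GenAIMatch]:
    """Return the GenAI app a URL belongs to, or None for non-AI traffic."""
    parts = urlsplit(url)
    host = (parts.hostname or "").lower()
    path = parts.path or "/"
    best, best_len = None, -1
    for (cat_host, prefix), (app, embedded) in GENAI_CATALOGUE.items():
        # Prefer the longest matching path prefix on the same host.
        if host == cat_host and path.startswith(prefix) and len(prefix) > best_len:
            best, best_len = GenAIMatch(app, embedded), len(prefix)
    return best
```

In this sketch, a request to `docs.example-saas.test/assistant/...` is classified as an embedded AI feature, while other paths on the same sanctioned host fall through as ordinary SaaS traffic.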

Policy decisions are based on AI-specific risk signals, including data handling posture, model training behaviour, identity characteristics, and compliance alignment.

In practice, this means enforcing granular controls such as:

- Blocking data uploads while allowing query-only usage

- Restricting high-risk AI applications

- Applying DLP policies to prompt content

- Limiting AI access by user, device posture, or risk profile
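The controls above can be sketched as an ordered rule evaluation. This is a minimal illustration under stated assumptions, not the platform's policy engine: the `Request` fields, the risk tiers, and the rule order are all hypothetical, and a real policy would be defined in Strata Cloud Manager rather than in code.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Tuple


class Action(Enum):
    ALLOW = "allow"
    BLOCK = "block"


@dataclass(frozen=True)
class Request:
    app_risk: str           # hypothetical tiers: "low", "medium", "high"
    activity: str           # "query" or "upload"
    device_compliant: bool  # result of a device posture check
    dlp_match: bool         # True if the prompt matched a DLP data pattern


def evaluate(req: Request) -> Tuple[Action, str]:
    """Apply the first matching rule and return the action with a reason."""
    if req.app_risk == "high":
        return Action.BLOCK, "high-risk AI application"
    if not req.device_compliant:
        return Action.BLOCK, "device posture not compliant"
    if req.activity == "upload":
        return Action.BLOCK, "uploads blocked; query-only usage allowed"
    if req.dlp_match:
        return Action.BLOCK, "prompt matched DLP policy"
    return Action.ALLOW, "query permitted"
```

For example, `evaluate(Request("low", "upload", True, False))` blocks the upload, while the same user issuing a plain query with no DLP match is allowed.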

Importantly, inspection is applied inline and continuously, using the same enforcement model as the rest of the platform. This approach extends inspection depth within the platform, rather than adding a reactive bolt-on tool.

Operational Scenario

Scenario: A user copies internal financial data into a public AI chatbot.

Without AI-specific inspection:

- Traffic may be decrypted and inspected inline, or it may remain encrypted and outside effective inspection

- Traffic is identified only as a general application or as encrypted web traffic

- No prompt-level inspection is applied

- Sensitive data exposure goes undetected

With AI Access Security:

- Traffic is decrypted and inspected inline

- AI application is identified

- Prompt-level inspection is applied inline

- DLP policy is evaluated against user and device context

- Upload is blocked or restricted according to policy
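To make the prompt-level inspection step concrete, the sketch below scans prompt text for two kinds of sensitive content and returns a verdict. It assumes the traffic has already been decrypted and the prompt extracted. The detectors (a classification-marker keyword match and a Luhn-validated card number match) and the function names are illustrative assumptions, not the platform's actual data patterns.

```python
import re

# Hypothetical detectors; production DLP data patterns are far richer.
CARD_RE = re.compile(r"\b(?:\d[ -]?){13,19}\b")
MARKER_RE = re.compile(r"\b(?:confidential|internal only)\b", re.IGNORECASE)


def luhn_valid(digits: str) -> bool:
    """Luhn checksum: double every second digit from the right, then sum."""
    total = 0
    for i, ch in enumerate(reversed(digits)):
        d = int(ch)
        if i % 2 == 1:
            d *= 2
            if d > 9:
                d -= 9
        total += d
    return total % 10 == 0


def scan_prompt(prompt: str) -> list:
    """Return the names of the detectors that fired on this prompt."""
    findings = []
    if MARKER_RE.search(prompt):
        findings.append("classification-marker")
    for match in CARD_RE.finditer(prompt):
        digits = re.sub(r"\D", "", match.group())
        if 13 <= len(digits) <= 19 and luhn_valid(digits):
            findings.append("payment-card-number")
            break
    return findings


def verdict(prompt: str) -> str:
    """Block the prompt if any detector fired, otherwise allow it."""
    return "block" if scan_prompt(prompt) else "allow"
```

A prompt such as "Q3 forecast, internal only: revenue 4.2m" would be blocked by the marker detector, whereas a general question with no sensitive content would pass. In the scenario above, this verdict would then be combined with user and device context before the final action is taken.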

Platform Outcomes

When AI Access Security is implemented as part of a NetSec platform, the outcomes include:

- AI usage governed without introducing a parallel security stack

- Clear visibility into AI usage patterns

- Consistent data protection across AI and non-AI traffic

- Policies that scale as AI capabilities evolve

- No additional operational domain to manage